OpenAI Buys Python’s Toolchain, GitHub Turns Your Code Into Training Data, and China Embeds AI Into a Billion Conversations

The Developer Stack Is Now the AI Strategy, and Your Tools Are the Battlefield

Every technology wave eventually shifts focus from the technology itself to the infrastructure beneath it. That shift happened this week. OpenAI didn’t release a better model; it bought the package manager, linter, and type checker that millions of Python developers use daily. GitHub didn’t make Copilot’s suggestions better; it quietly changed its terms so your code now helps train the next version. Tencent didn’t build a more advanced AI assistant; it added one directly into WeChat as a contact for a billion users.

The model wars are still happening, but they’re no longer the main focus. Now, the real competition is over who controls the environment where your code runs, who can access the data moving through your tools, and which messaging platform becomes the main channel for distributing AI agents at scale. These are battles over infrastructure, not features, and they’re much harder to undo.

At the same time, a supply chain attacker compromised three AI developer tools, two of them security scanners, in a matter of days, using stolen credentials and an AI agent. Separately, a Kubernetes vulnerability has been sitting quietly in millions of default installs, and a single bad Ingress annotation could expose every secret in your cluster.

A lot has happened. Here’s what you need to know.

OpenAI Acquires Astral: uv, Ruff, and ty Are Now OpenAI Property

Announced March 19, 2026

OpenAI has agreed to acquire Astral, the company behind uv, Ruff, and ty, three tools that have quietly become load-bearing infrastructure for Python development worldwide. uv is the package and environment manager that has replaced pip for a huge portion of the Python community. Ruff is the linter and formatter that has taken over the jobs of Flake8, Black, and isort combined. ty is the type checker, newer and faster than mypy. All three are written in Rust. All three are MIT-licensed and see hundreds of millions of downloads per month. Charlie Marsh, Astral’s founder, is joining OpenAI’s Codex team. Both companies confirmed the tools will stay open source after the deal closes. (OpenAI Blog, Astral Blog)

These are not Astral’s only products. The company was also building pyx, a cloud-hosted Python package registry, and that now belongs to OpenAI too. (Open Source For You)

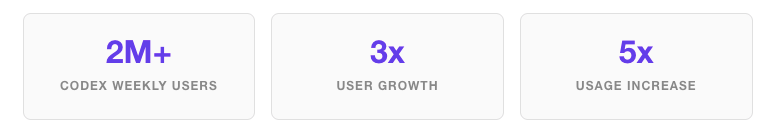

For context: Codex has grown to over 2 million weekly active users since January 2026, a 3x increase in users and a 5x increase in usage in under four months. OpenAI has also acquired Statsig and Neptune.ai since late 2025. Anthropic purchased the JavaScript runtime and bundler Bun in December 2025. Both labs are, independently, doing the same thing: buying the toolchain layer. (The Register)

Model quality benchmarks are converging. Developer experience is not. The lab that owns your package manager, your linter, and your type checker controls the feedback loop around agentic coding: uv spins up environments in milliseconds, Ruff validates output before the user sees it, and ty catches type errors mid-generation. That’s not a feature; it’s a closed loop. And the team that built these tools arrives at Codex already knowing more about how Python agents fail in production than any model training run could tell you.
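To make the closed-loop idea concrete, here is a minimal sketch of a generate, check, and retry gate. It is not OpenAI’s or Astral’s design. It assumes the `ruff check` and `ty check` commands are on your PATH, and the `generate` callable stands in for a hypothetical model call. A plain `py_compile` syntax check is included so the loop still works where the Rust tools aren’t installed.

```python
import subprocess
import sys
import tempfile
from pathlib import Path
from typing import Callable, Optional

# Syntax-only check that works anywhere Python runs.
SYNTAX_CHECK = [sys.executable, "-m", "py_compile"]

# Assumed wiring for the Astral tools; adjust commands and flags for your setup.
DEFAULT_CHECKS = [SYNTAX_CHECK, ["ruff", "check"], ["ty", "check"]]


def run_checks(source: str, checks: list[list[str]]) -> list[str]:
    """Write `source` to a temporary file, run every check command on it,
    and return the output of each check that exited non-zero."""
    failures: list[str] = []
    with tempfile.TemporaryDirectory() as tmp:
        path = Path(tmp) / "candidate.py"
        path.write_text(source)
        for cmd in checks:
            result = subprocess.run([*cmd, str(path)], capture_output=True, text=True)
            if result.returncode != 0:
                failures.append(f"{' '.join(cmd)} failed:\n{result.stdout}{result.stderr}")
    return failures


def generate_until_clean(
    generate: Callable[[Optional[str]], str],
    checks: list[list[str]] = DEFAULT_CHECKS,
    max_attempts: int = 3,
) -> tuple[str, bool]:
    """Ask `generate` (a hypothetical model call) for code, run the checks,
    and pass any failure output back as feedback for the next attempt.
    Returns the last candidate and whether it passed every check."""
    feedback: Optional[str] = None
    source = ""
    for _ in range(max_attempts):
        source = generate(feedback)
        failures = run_checks(source, checks)
        if not failures:
            return source, True
        feedback = "\n".join(failures)
    return source, False
```

In an agent, `generate` would wrap the model call and fold `feedback` into the next prompt, so the user only ever sees a candidate that passed. The faster the checks run, the more retries fit inside one response, which is why tool speed matters here.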

The trust question is real and worth saying plainly: OpenAI has a thin track record of open-source stewardship. The Python community spent years worrying about Astral being VC-funded. Now the tools are inside the company that generates more AI hype than anyone on earth. The MIT license means a fork is always possible, but forks take energy and community goodwill. Watch how OpenAI handles its first governance decision on these projects; it will tell you a lot about their actual intentions. (Simon Willison’s analysis)

Tencent Drops an AI Agent Into WeChat. One Billion People Can Now Just… Chat With It

Launched March 22, 2026

Tencent rolled out ClawBot, an integration of the open-source OpenClaw AI agent framework, as a native contact inside WeChat. You don’t install anything. You don’t sign up for anything. You add it the way you’d add a friend and start sending it tasks. File transfers, email drafting, task automation: it handles them all through a standard chat interface. (TechNode, South China Morning Post)

OpenClaw itself was built by Austrian developer Peter Steinberger, which makes it an interesting case: a European open-source project becoming the backbone of a Chinese mega-platform’s agent strategy. Within the same week, Alibaba launched Wukong, Baidu released OpenClaw-compatible tools across desktop, mobile, cloud, and smart home, and Xiaomi and Zhipu AI joined dozens of others building on the same protocol. China’s government has simultaneously issued security warnings about unauthenticated OpenClaw instances and malicious plugins, while provincial governments are actively subsidizing adoption. That combination, official caution plus official funding, is China moving fast and managing the risks in parallel, not sequentially. (Technology.org, Digitimes)

Distribution has always been the hard problem in consumer tech. WeChat solves it completely. Tencent doesn’t need to convince anyone to try a new app, create an account, or change a habit. ClawBot lives where over a billion people already spend hours of their day. The barrier to AI agent adoption in China just collapsed to a QR code scan.

The strategic implication for everyone else building AI agents is direct: whoever controls the primary communication surface controls agent distribution. This is exactly why the EU antitrust action against Meta’s WhatsApp AI restrictions is not just a legal footnote. It’s a fight over whether a single platform can block competing AI agents from reaching WhatsApp’s three billion users, at the same moment Tencent is opening the door on WeChat.

GitHub Will Train Copilot on Your Code Starting April 24, Unless You Opt Out

Announced March 25, 2026

GitHub updated its Privacy Statement and Terms of Service. From April 24, 2026, interaction data from Copilot Free, Pro, and Pro+ users (inputs, outputs, code snippets, and context) will be used to train AI models by default. You have to explicitly opt out at github.com/settings/copilot. Business and Enterprise plan users are not affected. Students and teachers are exempt. Data may be shared with Microsoft affiliates but not with third-party AI providers. (GitHub Blog, GitHub Changelog)

GitHub’s justification: Microsoft employee data was used to train a previous Copilot version, and suggestion acceptance rates improved. GitHub wants more of that signal. The Register immediately noted that this approach follows US opt-out norms rather than European opt-in requirements, precisely the gap GDPR was designed to close.

This is a meaningful shift, and worth saying directly: if you’re a freelancer using Copilot Free or Pro across client projects, code you write under NDA may become training data unless you opt out before April 24. The same goes for any open-source maintainer whose Copilot sessions touch proprietary context, even in private repos.

The Business/Enterprise exemption is also a deliberate market signal. Data sovereignty is becoming a tiered product feature, not a default right. Paying more buys you the guarantee that your code isn’t someone else’s training fuel. Expect this framing to spread: Cursor, JetBrains AI, and every other AI coding tool now have a business-model template for why enterprise plans cost more.

Cloudflare Puts a Frontier Model on Workers AI and Cuts Token Costs by 77%

Announced March 19, 2026

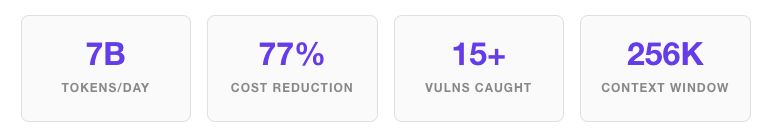

Cloudflare’s Workers AI now supports Kimi K2.5, a frontier-scale open-source model from Moonshot AI with a 256,000-token context window, multi-turn tool calling, vision inputs, and structured outputs. That’s a context window competitive with the best proprietary models, running on Cloudflare’s edge infrastructure. (Cloudflare Blog, Cloudflare Changelog)

Three new platform features landed with the model: prefix caching with discounted pricing for cached tokens; a new x-session-affinity header that routes multi-turn agent requests to the same model instance to maximize cache hit rates; and a redesigned async batch API for background agent tasks that don’t need real-time response.
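Here is a minimal sketch of how a multi-turn agent might build its requests with prefix caching and session affinity in mind. It only constructs the requests and doesn’t send them. Assumptions to check against Cloudflare’s documentation: the OpenAI-compatible endpoint path, the model identifier, and the format of the `x-session-affinity` value. `ACCOUNT_ID`, `API_TOKEN`, and the `AgentSession` class are hypothetical. The design point is that the system prompt stays byte-identical at the front of every request, so a prefix cache can match it, and every turn of one conversation carries the same session key.

```python
import json
import urllib.request
import uuid

# Placeholders: verify the endpoint, model ID, and header format in Cloudflare's docs.
ACCOUNT_ID = "your-account-id"
API_TOKEN = "your-api-token"
MODEL_ID = "@cf/moonshotai/kimi-k2.5"  # assumed identifier
ENDPOINT = f"https://api.cloudflare.com/client/v4/accounts/{ACCOUNT_ID}/ai/v1/chat/completions"

# Large, unchanging system prompt: keep it identical and first in every request
# so the shared prefix can be served from cache.
SYSTEM_PROMPT = "You are a code security reviewer. (Long, fixed instructions go here.)"


class AgentSession:
    """Hypothetical helper that accumulates one conversation's history and
    tags every request with the same session-affinity key."""

    def __init__(self) -> None:
        self.session_id = str(uuid.uuid4())
        self.messages = [{"role": "system", "content": SYSTEM_PROMPT}]

    def build_request(self, user_text: str) -> urllib.request.Request:
        self.messages.append({"role": "user", "content": user_text})
        body = json.dumps({"model": MODEL_ID, "messages": self.messages}).encode()
        return urllib.request.Request(
            ENDPOINT,
            data=body,
            method="POST",
            headers={
                "Authorization": f"Bearer {API_TOKEN}",
                "Content-Type": "application/json",
                # Ask the platform to route every turn of this session to the same instance.
                "x-session-affinity": self.session_id,
            },
        )

    def record_reply(self, assistant_text: str) -> None:
        self.messages.append({"role": "assistant", "content": assistant_text})
```

To actually send a request, pass it to `urllib.request.urlopen`. Because each request body is a strict extension of the previous one, everything before the newest user message is a candidate for prefix reuse, which is the pattern the caching and affinity features reward.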

Cloudflare ran its own internal code security review agent on Kimi K2.5: the agent processes 7 billion tokens per day, caught 15+ confirmed vulnerabilities in a single codebase, and cost 77% less than a mid-tier proprietary equivalent. The 77% figure comes from Cloudflare’s own production workload, not a benchmark on a toy dataset. And the features launched alongside the model target three specific failure modes of agentic systems: spreading a session across instances breaks cache coherence, large system prompts repeated on every turn are billed at full price each time, and real-time latency requirements block throughput. This is a company that has clearly been running agents at scale, hit all three walls, and built around them.

If your agents currently send large system prompts on every turn, a very common architecture, evaluate prefix caching on Workers AI against your current stack. The savings math is straightforward, and the edge latency benefits are real for globally distributed workloads.
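The savings math can be sketched in a few lines. The price and discount used below are made-up placeholders, not Cloudflare’s rates; substitute the published per-token prices for your model. The function is a simplification that assumes only the fixed system prompt is cached and that every turn after the first hits the cache.

```python
def conversation_input_cost(
    system_tokens: int,
    turn_tokens: list[int],
    price_per_m: float,
    cached_discount: float,
) -> tuple[float, float]:
    """Return (uncached_cost, cached_cost) for the input side of one conversation.

    system_tokens: size of the fixed system prompt sent on every turn
    turn_tokens: other input tokens sent on each turn
    price_per_m: price per million uncached input tokens
    cached_discount: fraction knocked off cached tokens (0.9 = 90% off)
    """
    per_token = price_per_m / 1_000_000
    uncached = sum(system_tokens + t for t in turn_tokens) * per_token
    cached = 0.0
    for i, t in enumerate(turn_tokens):
        # The first turn pays full price to populate the cache; later turns
        # pay the discounted rate for the system-prompt prefix only.
        system_rate = per_token if i == 0 else per_token * (1 - cached_discount)
        cached += system_tokens * system_rate + t * per_token
    return uncached, cached
```

For example, `conversation_input_cost(20_000, [1_000] * 10, 1.0, 0.9)` (a 20,000-token system prompt, ten 1,000-token turns, a placeholder $1 per million tokens, and a 90% cache discount) drops the input cost from $0.21 to about $0.048 per conversation. The larger the fixed prompt relative to each turn, the bigger the gap.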

Microsoft Shows 10 Months of AI Coding Agent Data. The Bottleneck Isn’t What You Think.

Published March 23, 2026

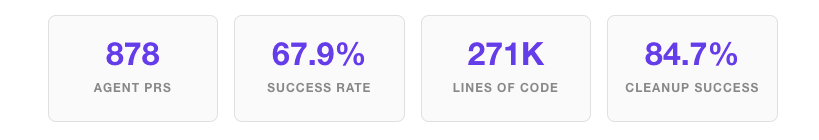

The .NET team released the most detailed production retrospective on AI coding agents to date, covering 10 months of using GitHub’s Copilot Coding Agent (CCA) in the dotnet/runtime repository, one of the most complex and scrutinized codebases in enterprise open source. The numbers were striking: 878 CCA pull requests in dotnet/runtime, 535 of them merged, and a reported success rate of 67.9%. Across 7 .NET repositories: 2,963 CCA PRs in total, 1,885 merged, and a 68.6% success rate overall. The agent contributed roughly 271,000 net lines of code. The success rate was 41.7% in the first month and around 71% in the most recent quarter. Cleanup and mechanical test coverage tasks succeeded 84.7% of the time; performance optimization was by far the hardest category. One maintainer opened 9 PRs from a phone at 35,000 feet on a cross-country flight, using only Copilot. (Microsoft .NET Blog)

The headline finding is counterintuitive and important: the bottleneck isn’t code generation, it’s code review. Agent output scales faster than human review can absorb. Teams that deploy coding agents and don’t plan for review bandwidth will actually ship slower, not faster, because the queue of unreviewed agent PRs drags on the whole pipeline.
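A toy queue model shows why review bandwidth, not generation, sets the pace. The rates below are hypothetical and not taken from the .NET data: whenever agents open PRs faster than reviewers can clear them, the backlog grows every week, no matter how good each individual PR is.

```python
def review_backlog(
    weeks: int,
    prs_opened_per_week: int,
    reviews_per_week: int,
    start_backlog: int = 0,
) -> list[int]:
    """Return the unreviewed-PR backlog at the end of each week, assuming
    reviewers clear at most `reviews_per_week` PRs from the queue."""
    backlog = start_backlog
    history = []
    for _ in range(weeks):
        backlog += prs_opened_per_week              # new agent PRs arrive
        backlog -= min(backlog, reviews_per_week)   # reviewers drain what they can
        history.append(backlog)
    return history
```

With 30 agent PRs a week against 20 reviews, `review_backlog(8, 30, 20)` grows by 10 every week, reaching 80 unreviewed PRs after two months. The levers are more review capacity or fewer, better-scoped agent PRs.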

The climb in success rate from 41.7% to about 71% over 10 months tells you something else useful: teams get better at using agents not just because the model improves, but because they learn which tasks to assign. Mechanical, bounded, well-defined work succeeds at high rates. Open-ended architectural or performance work often fails. The skill of “writing good agent tasks” is real and learnable, and it’s currently undertaught.

Three AI Developer Tools Compromised in Nine Days. Your Security Scanner Was the Attack Vector.

Between March 19 and 24, 2026

Between March 19 and 24, 2026, a coordinated campaign attributed to threat actor TeamPCP hit three tools in the AI developer stack in quick succession. (Snyk, Kaspersky)

March 19: Aqua Security’s Trivy GitHub Action, one of the most widely used container vulnerability scanners in CI/CD, had 75 version tags force-pushed by attackers to serve credential-stealing malware. March 23: Checkmarx’s KICS was compromised with the same tag-poisoning technique. March 24: two versions of LiteLLM, 1.82.7 and 1.82.8, were pushed to PyPI containing a malicious .pth file. LiteLLM routes calls to OpenAI, Anthropic, Google, and 100+ other LLM providers through a single API. It’s downloaded millions of times daily and sits at the center of most production AI pipelines. The .pth file executes on every Python process startup, not just when LiteLLM is imported. PyPI quarantined the packages after approximately three hours.

The campaign used stolen maintainer credentials throughout. The targeting was assisted by an autonomous AI agent called “hackerbot-claw”, a documented first for this kind of supply chain attack.

If LiteLLM version 1.82.7 or 1.82.8 was in any of your environments between roughly 10:39 and 13:30 UTC on March 24, treat your SSH keys, cloud credentials, and .env files as compromised. Rotate everything. Check for litellm_init.pth in your Python environment.
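A quick local check can be sketched with the standard library. It reports the installed `litellm` version, if any, and lists `.pth` files in your site-packages directories: `litellm_init.pth` is flagged by name, and any `.pth` file with a line starting with `import` is flagged because Python’s `site` module executes such lines at interpreter startup. Legitimate packages also ship executable `.pth` files, so treat the second list as things to review, not proof of compromise. It only covers the interpreter you run it with; repeat it in every virtual environment and container image.

```python
import importlib.metadata
import site
from pathlib import Path
from typing import Optional

COMPROMISED_VERSIONS = {"1.82.7", "1.82.8"}
KNOWN_BAD_PTH = {"litellm_init.pth"}


def installed_litellm_version() -> Optional[str]:
    """Return the installed litellm version in this interpreter, or None."""
    try:
        return importlib.metadata.version("litellm")
    except importlib.metadata.PackageNotFoundError:
        return None


def scan_pth_files(dirs: list[str]) -> dict[str, list[Path]]:
    """Split .pth files into known-bad names and files with executable lines."""
    findings: dict[str, list[Path]] = {"known_bad": [], "executable": []}
    for d in dirs:
        base = Path(d)
        if not base.is_dir():
            continue
        for pth in sorted(base.glob("*.pth")):
            if pth.name in KNOWN_BAD_PTH:
                findings["known_bad"].append(pth)
            text = pth.read_text(errors="replace")
            # site.py executes .pth lines that begin with "import " or "import\t".
            if any(line.startswith(("import ", "import\t")) for line in text.splitlines()):
                findings["executable"].append(pth)
    return findings


if __name__ == "__main__":
    version = installed_litellm_version()
    print(f"litellm installed: {version}")
    if version in COMPROMISED_VERSIONS:
        print("WARNING: compromised litellm version installed")
    dirs = site.getsitepackages() + [site.getusersitepackages()]
    results = scan_pth_files(dirs)
    for path in results["known_bad"]:
        print(f"KNOWN BAD: {path}")
    for path in results["executable"]:
        print(f"review (executes code at startup): {path}")
```

A clean result here doesn’t undo an exposure that already happened; if a compromised version was installed during the window, the credential rotation above still applies.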

The structural lesson matters more than any single incident. This campaign went after the security tooling layer — the scanners you use to catch vulnerabilities are now the vulnerability. The fix is mechanical but not automatic: switch from tag pins to commit SHA pins in your Actions, deploy StepSecurity Harden Runner to monitor CI/CD egress, and start generating SBOMs for your AI stack dependencies.
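Finding tag-pinned actions is easy to automate. Here is a minimal sketch, assuming your workflows live under `.github/workflows/` and use the usual single-line `uses: owner/repo@ref` form. It uses a regular expression rather than a YAML parser, so unusual formatting may slip past it. Local actions (`./path`) and `docker://` references are skipped.

```python
import re
import sys
from pathlib import Path

# Matches "uses: owner/repo@ref" or "- uses: owner/repo/path@ref", with optional quotes.
USES_RE = re.compile(r"""^\s*(?:-\s*)?uses:\s*["']?([^\s"'#]+)""")
FULL_SHA_RE = re.compile(r"^[0-9a-f]{40}$")


def unpinned_actions(workflow_text: str) -> list[tuple[int, str]]:
    """Return (line_number, reference) for each action not pinned to a full commit SHA."""
    problems = []
    for lineno, line in enumerate(workflow_text.splitlines(), start=1):
        m = USES_RE.match(line)
        if not m:
            continue
        ref = m.group(1)
        if ref.startswith(("./", "docker://")):
            continue  # local actions and container images are out of scope here
        _, _, version = ref.rpartition("@")
        if not FULL_SHA_RE.match(version):
            problems.append((lineno, ref))
    return problems


if __name__ == "__main__":
    root = Path(sys.argv[1]) if len(sys.argv) > 1 else Path(".github/workflows")
    for wf in sorted(root.glob("*.y*ml")):
        for lineno, ref in unpinned_actions(wf.read_text()):
            print(f"{wf}:{lineno}: not SHA-pinned: {ref}")
```

Run it from the repository root, or pass a workflow directory as the first argument, then replace each flagged reference with the full commit SHA of a release you have verified, keeping the tag in a trailing comment for readability. A tag can be force-pushed to new code, as the Trivy incident showed; a full commit SHA cannot be silently repointed.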

OpenAI Shuts Down Sora, Starts Collapsing Its Product Surface Into One App

Announced March 24, 2026

OpenAI killed Sora in its entirety: the app, the API, and video generation inside ChatGPT. The $1 billion Disney partnership, which included licensing rights to 200+ Disney characters, ended with it. Disney’s spokesperson was diplomatic about it. OpenAI’s head of applications told staff the company is “orienting aggressively” toward enterprise. A Q4 2026 IPO is reportedly the forcing function. (CNBC, Variety, Hollywood Reporter)

In the same week, OpenAI announced plans to merge its standalone web browser, ChatGPT desktop app, and Codex coding agent into a single desktop superapp. The Instant Checkout shopping feature was also discontinued.

Sora reportedly consumed enormous computing resources, generated no enterprise revenue, and carried significant legal exposure from deepfakes and copyright. Shutting it down is not a retreat; it’s financial discipline ahead of what appears to be an IPO preparation cycle. The Astral acquisition, the app consolidation, the enterprise pivot: these aren’t separate stories. They’re one story about a company that needs to show institutional investors a clean, profitable product surface, not a collection of expensive experiments.

The gap Sora leaves is real. Runway, Pika, and whatever open-source video-generation projects can absorb displaced users will see acceleration. The Codex-into-ChatGPT merger is also worth watching: it puts OpenAI in direct competition with Cursor and Windsurf in the AI coding editor market, backed by a model integration that third parties can’t fully replicate.

Arm Builds Its First Real Chip and Sells It to the Hyperscalers

Announced March 24, 2026

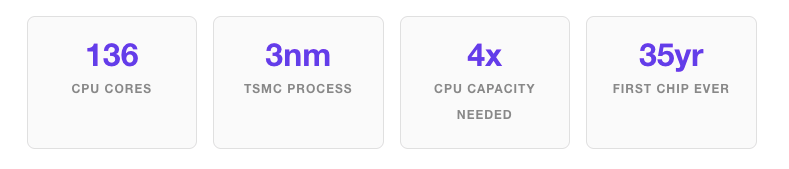

Arm Holdings, a company that has spent its entire 35-year existence licensing chip designs rather than selling silicon, launched the AGI CPU: a 136-core data center processor that it designed itself and now sells as a finished product. This is genuinely new for Arm. Meta co-developed the chip to run alongside its custom MTIA accelerators. The customer list (OpenAI, Cerebras, Cloudflare, AWS, Google, Nvidia, Samsung, SK Telecom) reads like a who’s who of the companies most invested in an alternative to x86. Systems from Lenovo, Supermicro, Quanta, and ASRock Rack are shipping now, with broader availability in H2 2026. The chips are manufactured on TSMC’s 3-nanometer process. (Arm Newsroom, Tom’s Hardware, Technology.org)

Arm is now a hardware competitor in the very market it has long supplied. The strategic driver is agentic AI: GPU farms were designed for batch inference and training, while agents are persistent, stateful, coordination-heavy workloads, which is CPU territory. Arm claims data centers will need more than 4x their current CPU capacity per gigawatt as agent workloads scale. AMD is already reporting 6-month CPU delivery lead times, and Intel says its inventory is at its lowest level in years. The CPU is having a moment, and Arm has positioned itself at the center of it.

For developers building agentic systems, Arm-native toolchains, container images, and deployment pipelines are not future concerns. They’re a current procurement reality. If your production containers are x86-only, add Arm targets to your build pipeline now.

White House Releases Its AI Legislative Framework. The Key Word Is “Preemption.”

Released March 20, 2026

The Trump administration published a four-page National Policy Framework for Artificial Intelligence: a set of legislative recommendations to Congress covering child safety, data center energy costs, intellectual property, free speech, workforce impacts, innovation, and federal preemption of state AI laws. The framework does not create a new federal AI regulator. It proposes routing oversight through existing agencies (FTC, FCC, and SEC, depending on the domain). It explicitly leaves questions about AI copyright training to the courts. And it calls for tech companies to cover data center energy costs rather than passing them on to ratepayers. (Crowell & Moring, WilmerHale, Roll Call)

If Congress passes a federal AI law with state preemption, the 50-state compliance patchwork that AI SaaS companies have been bracing for since 2024 collapses into a single federal standard, probably a lighter one, given the administration’s posture. That would be a genuine relief for smaller companies that can’t afford 50 separate compliance programs.

The problem is that this exact proposal has already failed twice in the current Congress. Don’t build your compliance roadmap around it passing. Colorado’s June 30 deadline is real and approaching fast. California is enforcing now. If your product uses AI in HR decisions, credit scoring, healthcare triage, or educational assessment, and you sell to customers in those states, that’s your operational timeline, not the Washington legislative calendar. (Mondaq)

Market Mood & Trend Pulse

Hiring Signal

Demand for AI-native engineers who can design multi-agent architectures, manage context windows at scale, and evaluate LLM output quality remains elevated, and supply has not caught up. Generalist “prompt engineer” roles are being quietly deprioritized as every developer is now expected to have basic LLM-interaction competency. Supply chain security engineers are the hottest specialist sub-category this week, in the direct wake of the TeamPCP campaign. An emerging role is gaining traction: the “AI task designer”, the person who knows which tasks to assign to coding agents, how to scope them for high success rates, and how to build review workflows around agent output. The Microsoft data suggests this skill has measurable commercial value. It barely existed as a job description 12 months ago.

VC Risk Appetite

Cybersecurity remains the most institutionally consistent AI investment category. Investment in consumer AI apps (video generation, social AI, and creative consumer tools) has cooled sharply after Sora’s failure. Venture money is concentrating on AI infrastructure, developer identity, agentic workflow platforms, and toolchain security. The OpenAI/Astral acquisition also signals that strategic corporate buyers are moving faster than VCs to identify high-value developer tooling assets.

Dominant Developer Narrative

Three threads are consolidating simultaneously heading into Q2. The first, “agents, not chatbots,” is now the consensus: the productive model for AI-assisted development is autonomous workflows, not conversational exchanges. The second, intensifying sharply after TeamPCP, is “trust nothing you didn’t pin.” Package provenance, commit-SHA discipline, and supply chain hygiene are moving from security-specialist territory into standard developer practice. The third, emerging this week after the GitHub policy announcement, is “your tools are training on you.” Tool selection now carries a data sovereignty dimension it didn’t have six months ago, and developers are starting to factor it into stack decisions.

Originally published at https://www.webdevstory.com on March 27, 2026.